What is a remote OSCE and how does it differ from traditional OSCEs

Objective Structured Clinical Examinations (OSCEs) assess clinical competence by observing how candidates perform across multiple structured stations. Each station targets a specific task such as history taking, communication, or clinical reasoning. Educators introduced the format to solve long-standing problems in traditional clinical exams, where differences in patients and examiners often produced inconsistent results. Many institutions now run OSCEs remotely in response to changing educational needs.

In a standard OSCE, candidates rotate through timed stations and interact with simulated or real patients in controlled settings. Examiners use predefined checklists and rating scales to score performance. This structure lets educators assess a wide range of skills while keeping the level of difficulty consistent for all candidates. As a result, medical schools widely adopted OSCEs as a reference standard for performance-based assessment.
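As a rough illustration of checklist-based scoring, the sketch below converts one examiner's marks for a single station into a percentage. The item labels, point values, and the `station_score` helper are hypothetical and are not taken from any published checklist; real OSCEs often combine a checklist with a global rating scale, which this sketch leaves out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ChecklistItem:
    label: str       # behavior the examiner looks for (hypothetical wording)
    max_points: int  # highest mark available for this item


def station_score(items, awarded):
    """Return the station score as a percentage of available points.

    `awarded` maps item labels to the points the examiner gave.
    Items missing from `awarded` count as zero.
    """
    total = sum(item.max_points for item in items)
    if total == 0:
        raise ValueError("checklist has no available points")
    earned = 0
    for item in items:
        points = awarded.get(item.label, 0)
        if not 0 <= points <= item.max_points:
            raise ValueError(f"invalid mark for {item.label!r}: {points}")
        earned += points
    return 100 * earned / total


# Hypothetical history-taking checklist, for illustration only.
checklist = [
    ChecklistItem("Introduces self", 1),
    ChecklistItem("Elicits presenting complaint", 2),
    ChecklistItem("Summarises and checks understanding", 2),
]
marks = {"Introduces self": 1, "Elicits presenting complaint": 2}
print(station_score(checklist, marks))
```

Because every candidate is marked against the same items, scores stay comparable across candidates and examiners, which is the point of the predefined checklist.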

A remote OSCE, also called a web-based or virtual OSCE, keeps this structure but moves all interactions online. Candidates, examiners, and simulated patients connect through video platforms, and coordinators manage stations through virtual breakout rooms. Despite this shift, educators still aim to evaluate observable clinical performance in a consistent and repeatable way.

However, the medium changes how interactions unfold. Candidates communicate through a screen, which affects how they gather information, express empathy, and manage the flow of the consultation. For example, they often replace physical examination with verbal reasoning based on provided findings. At the same time, teams handle timing, scoring, and feedback through digital systems, and virtual transitions replace physical movement between stations.

Because of these differences, educators should not treat remote OSCEs as direct equivalents of in-person exams. Instead, they should view them as an adaptation of the same assessment model to a different interaction environment, where some skills become more visible and others harder to assess.

Remote OSCE vs in-person OSCE: key differences in assessment quality

Although remote and in-person OSCEs share the same structural foundation, their differences affect how clinical competence is observed and interpreted. These differences are not only technical; they also influence the reliability and validity of the assessment itself.

One of the most significant distinctions lies in observation. In a traditional OSCE, examiners assess both verbal and non-verbal behavior in a shared physical space. Subtle cues such as posture, proximity, and physical interaction contribute to the evaluation of communication and professionalism. In a remote setting, these cues are filtered through a camera, which limits the examiner’s ability to capture the full interaction. As a result, assessments rely more heavily on verbal clarity and structured responses.

Standardization, which is a core strength of OSCEs, is affected differently in each format. In-person OSCEs control variability through station design and examiner training. Remote OSCEs add another layer of variability related to technology, including internet stability, camera positioning, and audio quality. While structured workflows and rehearsals can mitigate these risks, they introduce dependencies that do not exist in physical environments.

Another key difference concerns performance authenticity. OSCEs have always involved an element of staged interaction, where candidates perform expected clinical behaviors under observation. In remote settings, this dynamic becomes more pronounced because candidates operate in less controlled environments and may rely more on scripted communication patterns. This can make it more difficult to distinguish between genuine clinical reasoning and rehearsed responses.

Finally, the scope of assessment changes. In-person OSCEs can evaluate a full range of competencies, including physical examination and procedural skills. Remote OSCEs tend to focus on history taking, communication, and clinical reasoning, since these translate more directly to video-based interaction. Evidence from early implementations shows that most learning objectives can still be achieved, but practical skills often require alternative assessment methods.

Taken together, these differences do not invalidate remote OSCEs, but they do shift what is being measured. Therefore, understanding these shifts is essential before designing or interpreting any remote clinical assessment.

What skills can and cannot be assessed in a remote OSCE

Remote OSCEs shift the focus toward what examiners can clearly observe on screen. Educators can assess history taking, clinical reasoning, and decision making with little loss. Candidates still show how they gather information, structure their thinking, and explain next steps.

Examiners can also evaluate communication skills, although the format changes how these appear. To simulate eye contact, candidates must look at the camera rather than at the patient’s image on screen, so gaze alone becomes an unreliable signal during scoring. As a result, examiners need to adjust how they interpret behaviors like empathy and engagement.

However, remote OSCEs limit physical examination and procedural skills. Candidates often describe what they would do instead of performing the action. This allows examiners to assess reasoning, but not execution. As a result, educators cannot fully evaluate hands-on competence in this format.

Non-verbal behavior also becomes harder to judge. In a traditional OSCE, examiners observe posture, movement, and spatial awareness. In a remote setting, the camera restricts these signals. This pushes candidates to rely more on verbal performance, which can make interactions feel more scripted than real.

Overall, remote OSCEs work best when they focus on thinking and communication skills. They become less effective when they try to assess physical or embodied aspects of clinical work.

Challenges and limitations of remote OSCEs in medical education

Remote OSCEs introduce challenges that go beyond technology. These challenges affect how examiners interpret performance and how reliable the results are.

Technical dependency

Remote OSCEs depend on stable internet, clear audio, and proper camera setup. When any of these fail, the interaction breaks down. Even small delays or poor sound quality can change how examiners perceive a candidate’s performance.

A candidate begins a station on breaking bad news. Midway through the interaction, their audio starts to cut out. The simulated patient asks for clarification twice, and the examiner cannot clearly hear the candidate’s response. The candidate shortens their explanations to compensate, which makes the interaction appear abrupt and less empathetic. In this case, the assessment reflects the connection quality as much as the candidate’s skill.

Loss of observational depth

Examiners rely on more than words during an OSCE. They watch posture, eye contact, and physical presence to judge professionalism and communication. Remote OSCEs reduce these signals to what fits inside a camera frame. As a result, examiners depend more on verbal responses and less on overall interaction.

Standardization risks

Traditional OSCEs control the environment tightly. Remote OSCEs place candidates in different locations, each with different lighting, noise, and setup. These differences introduce variability that educators cannot fully control. Even with clear instructions, conditions remain uneven.

Impact on assessment accuracy

OSCEs already involve some level of performance, where candidates show expected behaviors under observation. In remote settings, this effect increases. Candidates rely more on structured responses and less on natural interaction. This makes it harder for examiners to distinguish between genuine understanding and rehearsed answers.

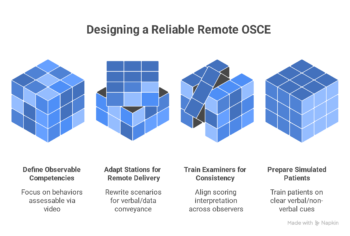

How to design a reliable remote OSCE

Designing a remote OSCE requires more than transferring existing stations to a video platform. The reliability of the assessment depends on how well the design aligns with what can be observed and measured in a remote setting.

Designing for observable behavior

The first step is to define observable competencies. Each station should focus on behaviors that can be clearly assessed through video interaction. For example, communication, clinical reasoning, and structured history taking remain appropriate targets. In contrast, competencies that rely on physical interaction should be redesigned or relocated to other assessment formats.

Adapting stations for remote delivery

Next, stations must be adapted for remote interaction. This involves rewriting scenarios so that all necessary information can be conveyed verbally or through provided data. Instructions to candidates should be explicit, and prompts for simulated patients must be calibrated to ensure consistent responses across sessions. This level of scripting is essential to maintain standardization.

Training examiners for consistency

Examiner calibration is also critical. OSCEs rely on consistent scoring across multiple observers, yet remote conditions introduce new sources of variability. Training sessions should address how to interpret behaviors on screen, including how to assess eye contact, pauses, and communication flow. Without this alignment, scoring differences can increase even if the checklist remains unchanged.

Preparing simulated patients

Simulated patient preparation must also be adjusted. Remote delivery changes how patients express symptoms, emotions, and cues. Training should focus on clarity of verbal expression and controlled use of non-verbal signals within the frame of the camera. Inconsistent portrayal directly affects the fairness of the assessment.

Running pilots and dry runs

Finally, pilot testing is essential. A full rehearsal allows educators to identify technical and procedural issues before high-stakes implementation. Dry runs help validate timing, transitions between stations, and scoring workflows, which are all more complex in a digital environment.
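One way to check timing and transitions before a dry run is to generate the rotation plan up front. The sketch below assumes a single circuit in which each candidate starts at a different station and moves one station forward each round. The `rotation_schedule` helper, the 8-minute station length, and the 2-minute changeover are illustrative assumptions, not recommended values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slot:
    round_no: int   # 1-based round within the circuit
    candidate: str
    station: str    # e.g. the name of a breakout room
    start_min: int  # minutes after the session starts


def rotation_schedule(candidates, stations, station_min=8, changeover_min=2):
    """Build a round-robin plan: every candidate visits every station once."""
    if len(candidates) > len(stations):
        raise ValueError("a circuit holds at most one candidate per station")
    n = len(stations)
    slots = []
    for round_no in range(n):
        start = round_no * (station_min + changeover_min)
        for i, candidate in enumerate(candidates):
            # Candidate i starts at station i and shifts by one each round,
            # so no two candidates share a station in the same round.
            station = stations[(i + round_no) % n]
            slots.append(Slot(round_no + 1, candidate, station, start))
    return slots


plan = rotation_schedule(
    ["Candidate A", "Candidate B", "Candidate C"],
    ["History taking", "Counselling", "Clinical reasoning"],
)
for slot in plan:
    print(slot.start_min, slot.candidate, "->", slot.station)
```

Printing the plan before the rehearsal gives coordinators a concrete list of who should be in which breakout room at each changeover, which makes timing slips easier to spot during the dry run.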

Maintaining assessment validity and reliability

Validity and reliability are central to any OSCE, and their preservation becomes more complex in remote formats. While the structure of the OSCE supports consistent measurement, its effectiveness depends on how well the implementation reflects real clinical performance.

Construct validity refers to whether the assessment measures what it intends to measure. In remote OSCEs, this is challenged by the absence of physical interaction and reduced environmental realism. For example, assessing clinical examination skills through verbalization may capture reasoning but not execution. This creates a gap between observed performance and real-world competence.

Reliability, particularly inter-rater reliability, also requires attention. Traditional OSCEs improved reliability by using multiple stations and standardized scenarios. However, remote settings introduce additional variability through differences in technology and interpretation of on-screen behavior. Examiner calibration and clear scoring rubrics become even more important to reduce inconsistencies.
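Inter-rater agreement can be checked numerically during calibration. The sketch below computes Cohen's kappa, a standard statistic for agreement between two raters that corrects for chance agreement. It is offered as one possible check, not as the method used in any particular study, and the pass/fail ratings are made-up example data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters scoring the same performances."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set")
    n = len(rater_a)
    # Proportion of performances where the two raters gave the same rating.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's category frequencies.
    freq_a = Counter(rater_a)
    freq_b = Counter(rater_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        # Both raters used one identical category throughout; kappa is
        # undefined here, so this sketch reports full agreement.
        return 1.0
    return (observed - expected) / (1 - expected)


# Made-up ratings from two examiners watching the same four recordings.
examiner_1 = ["pass", "pass", "fail", "fail"]
examiner_2 = ["pass", "fail", "fail", "fail"]
print(cohens_kappa(examiner_1, examiner_2))
```

A value near 1 indicates strong agreement, and a value near 0 indicates agreement no better than chance. Comparing kappa before and after a calibration session is one way to see whether training on on-screen behavior has narrowed scoring differences.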

Another consideration is fairness across candidates. Differences in internet quality, device setup, and environment can affect how candidates are perceived. While procedural safeguards such as pre-session checks can reduce these issues, they cannot fully eliminate them. As a result, fairness must be actively monitored and adjusted for where possible.

Despite these challenges, the core principles of OSCE design remain applicable. Multiple observations, structured scoring, and standardized cases continue to support defensible assessment decisions. However, the margin for error increases if these principles are not rigorously applied in the remote context.

Using remote OSCE in clinical training

Remote OSCEs are not limited to high-stakes assessment. They can also play a role in clinical training when used in a structured and intentional way.

In formative settings, remote OSCEs provide opportunities for repeated practice and feedback. Because logistical barriers are lower than for in-person exams, educators can run more frequent sessions. This allows trainees to refine communication and reasoning skills over time, rather than relying on a single high-stakes evaluation.

They also support integration into hybrid curricula. Remote OSCEs can complement in-person training by focusing on competencies that align with telemedicine and digital communication. As healthcare delivery increasingly includes remote consultations, this alignment becomes more relevant for real-world practice.

Another advantage lies in scalability. Institutions can involve distributed faculty and simulated patients without requiring physical co-location. This expands access to diverse cases and perspectives, which can enrich training experiences. However, this scalability depends on maintaining consistent standards across all participants.

At the same time, remote OSCEs should not replace all forms of clinical assessment. Skills such as physical examination, procedural techniques, and real-time team interaction still require in-person evaluation. Therefore, remote OSCEs are most effective when positioned as one component within a broader assessment strategy.

When to use remote OSCEs and when not to

Remote OSCEs are most appropriate when the assessment focus aligns with competencies that can be reliably observed through video interaction. These include communication, clinical reasoning, and structured decision making. In situations where access to clinical environments is limited, such as during public health disruptions, remote OSCEs provide a viable alternative that allows training and progression to continue.

They are also suitable for distributed training programs. Institutions with geographically dispersed learners can use remote OSCEs to standardize assessment without requiring travel or centralized facilities. This reduces logistical constraints while maintaining a structured evaluation process.

However, remote OSCEs are less appropriate when the goal is to assess hands-on competence. Physical examination, procedural skills, and complex team-based interactions depend on direct observation in a clinical environment. Attempting to assess these remotely risks reducing the validity of the evaluation.

Another limitation arises in high-stakes decision making where defensibility is critical. Educators can design remote OSCEs to meet rigorous standards, but they must implement additional safeguards to ensure fairness and consistency. When teams cannot guarantee these safeguards, in-person assessment remains the more reliable option.

Ultimately, educators should choose a remote OSCE only when the method aligns with the competency they want to measure. When they use it appropriately, it expands the reach of clinical education. When they misalign it, it distorts what the assessment actually measures.

FAQ

What is a remote OSCE exam?

A remote OSCE is a structured clinical assessment conducted through video platforms where candidates interact with simulated patients and examiners online. It follows the same station-based format as traditional OSCEs but replaces physical movement with virtual transitions and digital scoring systems.

How does a remote OSCE work for students?

Students join a scheduled session, receive instructions, and rotate through virtual stations using breakout rooms. In each station, candidates complete a clinical task such as history taking or decision making while an examiner observes and scores their performance. Coordinators control timing and manage transitions between stations.

Are remote OSCEs as reliable as in-person OSCEs?

Remote OSCEs can achieve acceptable reliability when designed with clear scoring criteria, multiple stations, and trained examiners. However, they introduce additional variability related to technology and reduced observation of non-verbal behavior, which can affect consistency if not carefully managed.

What are the challenges of remote OSCE assessments?

Key challenges include limited assessment of physical skills, dependence on technology, reduced observational depth, and the need for adapted scoring criteria. These factors can influence both validity and fairness if not addressed through structured design and testing.

How do you prepare examiners for a remote OSCE?

Examiners require specific training on how to interpret performance in a video-based environment. This includes calibration sessions, review of scoring rubrics, and practice with sample recordings to ensure consistent interpretation of communication and clinical reasoning.

What technology is required to run a remote OSCE?

A remote OSCE requires a stable video conferencing platform with breakout room functionality, reliable internet access for all participants, and devices with audio and video capabilities. In addition, institutions typically use a learning management system or digital scoring tool to manage checklists and examiner input. Clear workflows, technical support, and pre-session testing are essential to ensure consistent delivery and avoid disruptions during the assessment.

Conclusion

Remote OSCEs extend a well-established assessment model into a digital environment. Educators preserve the structured, station-based format while adapting delivery to remote interaction. This approach allows institutions to continue evaluating clinical competence when in-person assessment is not possible.

However, the change in medium affects what examiners can observe and measure. Examiners can still assess communication and clinical reasoning effectively. In contrast, video interaction limits physical examination and some aspects of professional behavior. As a result, remote OSCEs do not replicate traditional OSCEs. Instead, they redefine what the assessment can cover.

The effectiveness of a remote OSCE depends on design, not just technology. Educators must align competencies with observable behaviors, train examiners to score consistently, and enforce strong standardization across stations. When teams apply these principles, remote OSCEs can support both formative and summative assessment.

At the same time, educators should place remote OSCEs within a broader assessment strategy. No single method captures the full range of clinical competence. Remote OSCEs add the most value when combined with in-person assessments and other tools, so programs can evaluate both thinking and practical skills.
