Most examiners give feedback after an OSCE because the format asks them to. Few receive any training on how to do it well. The result is feedback that rarely lands, not because examiners are unskilled but because the conditions are almost optimally designed for poor retention. What follows is a set of techniques that work within those conditions rather than ignoring them.

Why OSCE Feedback Doesn’t Land

Three structural factors work against retention, and none of them are the examiner’s fault.

The first is cognitive load. A student coming off a high-stakes station is processing their own performance: replaying decisions, calculating what they missed, preparing for the next room. They are not primed to receive new information. Asking them to absorb feedback at this moment is asking them to add to a stack that is already full.

The second is emotional activation. In one study of medical students in Canada, approximately 29% reported an emotional reaction to OSCE feedback, most commonly embarrassment or anxiety. Feedback received in that state tends not to be reflected on. It is defended against. The student hears the critical point as an attack rather than information, and the examiner’s words do not reach the part of the mind that would act on them.

The third is time pressure. Most examiners have two to three minutes per station. That pressure pushes feedback toward the generic: “good communication”, “work on your history-taking”. Generic feedback cannot be acted on because it does not tell the student what specifically to replicate or change.

None of these are new problems. What has been missing is practical guidance for examiners on how to work within these constraints rather than around them. The five techniques below address each factor directly.

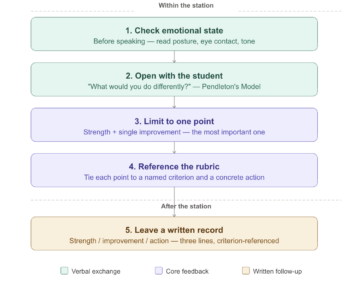

Technique 1: Check for Emotional Activation Before You Open

Most feedback frameworks assume the student is ready to receive. The research suggests otherwise.

Before delivering any feedback, take three seconds to assess the student’s state. Signs of emotional activation are visible: averted eye contact, a flat or clipped response to your opening question, a tight posture, or an immediate verbal defence of their performance before you have said anything evaluative. Any of these signals that the student is in a state where they will defend rather than absorb.

The adjustment is simple. Instead of moving directly into feedback, acknowledge the station first. Saying “That was a difficult one” or “You covered a lot of ground in a short time” is not flattery. It is a brief signal that you are not about to attack. Research on emotional responses to OSCE feedback found that specific examiner behaviours (eye rolls, audible sighs, a patronising tone) triggered emotional reactions that made the verbal content of the feedback irrelevant. The student stopped listening. The inverse is also true: a neutral, settled opening lowers the defensive response before the feedback begins.

This is not about being gentle with the improvement point. It is about ensuring the improvement point is actually heard. A student who is emotionally activated will not retain what you say regardless of how clearly you say it.

For a broader framework on reading nonverbal signals in clinical encounters, see our post on Nonverbal Communication in Healthcare.

Technique 2: Open With the Student, Not Your Assessment

Pendleton’s Model inverts the default feedback sequence: the student speaks before the examiner offers any evaluation. This is not just a structure for reducing defensiveness. It changes what the student retains.

Students who name the problem themselves tend to retain it better than those who receive the same observation passively. The examiner’s job in the first half of the exchange is to confirm and add precision, not to introduce the assessment from scratch.

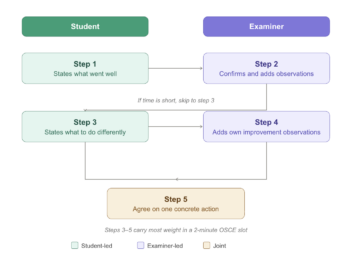

The full Pendleton sequence runs as follows:

- The student states what they felt went well

- The examiner confirms and adds what they observed went well

- The student states what they would do differently

- The examiner adds their own observations on what could improve

- Both agree on one concrete action for next time

In a two-minute OSCE slot, the last three steps carry the most weight. If time is short, go directly to “what would you do differently?” and build from the student’s answer. The examiner’s improvement point lands harder when it follows something the student has already named.

Pendleton’s Model is not universally appropriate. In summative OSCEs where the examiner role is explicitly evaluative, a student-led opening can feel incongruent with the context. Use it as the default for formative stations and adapt the sequence for summative ones.

For an overview of feedback frameworks used alongside Pendleton in healthcare education, see Implementing Feedback Models.

Technique 3: Limit Yourself to One Point

One specific observation, delivered well, produces more behavioural change than a comprehensive review delivered quickly.

The instinct to cover everything (strength, three areas for improvement, overall impression) is understandable. It feels thorough. But research on verbal OSCE feedback shows that students recall very little of what is said immediately after a station, and even less weeks later. A student who receives six observations retains fragments of two or three. A student who receives one targeted observation has something concrete to act on.

The practical rule: choose the single most important improvement point before the student enters the room. Everything else goes in the written summary (Technique 5). If you find yourself wanting to add a second point during the verbal exchange, ask whether it is genuinely more important than the first. If not, hold it.

What makes a point worth choosing:

- It is specific to what happened in this station, not a general pattern

- It maps to a criterion on the marking rubric

- The student can practise it before their next assessment

“Your communication was good but your history-taking needs work” fails all three tests. “You reached the diagnosis but did not ask about the patient’s ideas and concerns, which cost you marks on the communication criterion and is straightforward to add next time” passes all three.

Technique 4: Reference the Rubric Explicitly

Generic feedback fails not because examiners give it carelessly, but because it has no anchor. Tying every observation to a specific rubric criterion gives the student something to locate and act on.

In one study of written OSCE feedback, students rated access to the marking rubric as the most valuable element, above examiner comments and above scores alone. The rubric gives feedback a shared reference point. When an examiner names the criterion, the student knows exactly what to replicate or correct and where it sits in the assessment.

Three sentence structures that make this concrete:

Strength, criterion-referenced: “You checked the patient’s understanding after explaining the diagnosis. That maps directly to the communication criterion and you executed it well.”

Improvement, criterion-referenced: “The history ran long and you did not reach the management discussion. That cost you marks on the clinical reasoning criterion.”

Action, criterion-referenced: “Before your next OSCE, practise ending your history at the two-minute mark so you have time for the management plan, where the higher-weighted criteria sit.”

Each sentence tells the student what happened, where it sits in the assessment, and what to do about it. None requires more than thirty seconds to deliver.

One practical note: examiners who have not reread the rubric shortly before the station will struggle to reference it accurately. A one-minute rubric review at the start of each exam session is worth the time.

Technique 5: Leave a Written Record

Verbal feedback operates under the worst possible conditions for retention. Written feedback does not.

A student can return to a written note an hour later, the evening before their next assessment, or the week before finals. They cannot return to what you said in a two-minute verbal exchange at a station they were already processing under pressure. The formats are not equivalent, and treating them as interchangeable is a structural error in OSCE feedback design.

The format does not need to be long. Three lines are sufficient:

- Strength: one specific observation, criterion-referenced

- Improvement: one specific observation, criterion-referenced

- Action: one concrete thing to practise before the next attempt

In one study of surgical OSCE feedback, 87.5% of students and 91.6% of examiners agreed that written feedback should continue, including in summative settings. The key to feasibility is standardising the format before the exam so examiners are not writing from scratch at each station.
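
For institutions that capture notes digitally, one way to standardise the three-line format ahead of the exam is a small template. The sketch below is illustrative only: the `FeedbackNote` class, its field names, and the sample wording are assumptions made for this example, not part of any study or tool mentioned here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeedbackNote:
    """Hypothetical three-line written OSCE feedback note."""

    strength: str               # one specific observation
    strength_criterion: str     # rubric criterion the strength maps to
    improvement: str            # one specific observation
    improvement_criterion: str  # rubric criterion the improvement maps to
    action: str                 # one concrete thing to practise

    def render(self) -> str:
        """Return the note as three plain-text lines."""
        return "\n".join([
            f"Strength ({self.strength_criterion}): {self.strength}",
            f"Improvement ({self.improvement_criterion}): {self.improvement}",
            f"Action: {self.action}",
        ])


# Sample content adapted from the criterion-referenced sentences in Technique 4.
note = FeedbackNote(
    strength="Checked the patient's understanding after explaining the diagnosis.",
    strength_criterion="communication",
    improvement="History ran long; management discussion not reached.",
    improvement_criterion="clinical reasoning",
    action="Practise ending the history at the two-minute mark.",
)
print(note.render())
```

Because every field is required, an examiner cannot submit a note that omits the criterion or the action, which is the point of fixing the format in advance.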

Written feedback becomes significantly more useful when students can review a recording of their own performance alongside it. The note tells them what to look for; the recording makes it concrete. Reading that you ran out of time before the management discussion is one thing. Watching it happen is another. Videolab is built around this principle: written feedback and video review used together, not as separate tools.

For context on when written feedback matters most relative to assessment type, see Formative vs Summative Feedback.

Where the Field Is Heading

The five techniques above operate at the level of the individual examiner. A 2024 paper in Medical Teacher proposes a complementary model that operates at the level of the institution.

The model uses readily available summative assessment data to calculate what the authors call 10% index scores, a measure of relative station difficulty and relative individual student performance across the cohort. These scores make it possible to generate individualised, actionable feedback for every student following a summative OSCE without requiring additional live examiner time at the point of delivery.

The practical implication: an institution could combine examiner-level techniques (the five above) with a data-driven written summary generated from assessment scores. The examiner handles the verbal exchange; the system handles the scaled written record.

The model is new. Uptake data and independent replication are not yet available. But it addresses the most persistent structural problem in OSCE feedback (scale) without requiring behaviour change across an entire institution. That makes it worth watching.

Putting It Into Practice

The five techniques work as a sequence, not a menu. Used together, a two-minute feedback slot looks like this:

Before the student sits down, read their state. If you see signs of emotional activation, open with a brief neutral acknowledgement before moving to assessment. Then ask what they would do differently. Their answer tells you whether they have already identified the key problem, and it reduces defensiveness before you introduce your own observation. Limit your verbal feedback to one point: the single most important improvement, tied explicitly to a rubric criterion, with a concrete action attached. Then hand them a written note with the same point (one strength, one improvement, one action) that they can return to when the cognitive load and emotional activation have passed.

None of this requires more time than most examiners already have. It requires a different allocation of the time available: less on comprehensive verbal review, more on one specific observation the student can actually use.

The longer-term question for any institution running OSCEs is not whether feedback is given (it almost always is) but what students are doing with it 48 hours later. If the answer is “very little”, the problem is structural, not motivational. These techniques address the structure. The data and the recording handle the rest.

Frequently Asked Questions

Why does OSCE feedback have poor retention?

Three structural factors work against retention: cognitive load (students are processing their own performance after a high-stakes station), emotional activation (approximately 29% of students report embarrassment or anxiety during OSCE feedback), and time pressure (most examiners have two to three minutes per station, which pushes feedback toward the generic). Together, these mean feedback is delivered at the moment students are least able to absorb it.

What is Pendleton’s Model and how does it apply to OSCE feedback?

Pendleton’s Model inverts the default feedback sequence: the student speaks before the examiner offers any evaluation. The student first states what went well, then what they would do differently, before the examiner adds their own observations. In a time-constrained OSCE, the most useful part is asking the student what they would do differently and building the improvement point from their answer.

How many feedback points should an examiner give after an OSCE station?

One. Research on verbal OSCE feedback shows that students recall very little of what is said immediately after a station, and even less weeks later. A student who receives one targeted, criterion-referenced observation has more to act on than one who receives a comprehensive rundown. Additional points belong in a written summary, not the verbal exchange.

Is written feedback after a summative OSCE feasible for examiners?

Yes. In one study of surgical OSCE feedback, 87.5% of students and 91.6% of examiners agreed that written feedback should continue, including in summative settings. The key is standardising the format before the exam, using a three-line structure covering strength, improvement, and action, so examiners are not writing from scratch at each station.

What is criterion-referenced feedback in an OSCE?

Criterion-referenced feedback ties each observation directly to a named item on the marking rubric. Instead of “your communication was good”, the examiner states what specifically the student did and which criterion it maps to. Research shows students rate rubric access as the most valuable element of written OSCE feedback, above examiner comments and scores alone, because it tells them exactly what to replicate or correct.